Motion Patterns

The motion patterns are defined by constraints that relate the 3D motion of a system to local image motion measurements (P1, P2, P3). The idea is that robust local image measurements, namely the movement of local edges (called normal flow), form patterns in the image plane whose location denotes the 3D motion. While the 3D motion estimation problem has classically been considered a reconstruction problem requiring accurate local image measurements and numerically robust techniques, these constraints allow the problem to be formulated as a simple pattern matching problem. On the basis of these constraints, different biologically feasible algorithms can be designed, possibly also including additional inertial sensor information, which can also be used directly as input to a visual servo-control mechanism (P4).
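To make the notion of normal flow concrete, the sketch below computes it from two consecutive grayscale frames using the standard brightness-constancy relation: the flow component along the image gradient is the negative temporal derivative divided by the gradient magnitude. This is only an illustration under stated assumptions, not the method of the cited papers; the function name `normal_flow` and the threshold `min_grad` are hypothetical.

```python
import numpy as np

def normal_flow(frame0, frame1, min_grad=1e-3):
    """Estimate normal flow (flow component along the image gradient).

    Assumes brightness constancy: Ix*u + Iy*v + It = 0, so the component
    of (u, v) along the gradient is -It / |grad I|.
    Returns (nx, ny, valid): the normal flow vectors and a mask of pixels
    whose gradient is strong enough to be trusted.
    """
    frame0 = np.asarray(frame0, dtype=float)
    frame1 = np.asarray(frame1, dtype=float)

    # Spatial derivatives on the average of the two frames.
    mean = 0.5 * (frame0 + frame1)
    iy, ix = np.gradient(mean)   # axis 0 is rows (y), axis 1 is columns (x)
    it = frame1 - frame0         # temporal derivative, one frame apart

    grad_sq = ix**2 + iy**2
    valid = grad_sq > min_grad**2   # hypothetical threshold, tune per image

    # Normal flow vector: -It * grad I / |grad I|^2 (zero where invalid).
    scale = np.where(valid, -it / np.where(valid, grad_sq, 1.0), 0.0)
    return scale * ix, scale * iy, valid

# Synthetic check: a vertical grating shifted 0.5 pixels to the right.
X, _ = np.meshgrid(np.arange(64), np.arange(64))
f0 = np.sin(0.2 * X)
f1 = np.sin(0.2 * (X - 0.5))
nx, ny, valid = normal_flow(f0, f1)
median_nx = float(np.median(nx[valid]))
```

For the grating, the gradient points along x, so the normal flow coincides with the true horizontal shift; for a general edge only the component perpendicular to the edge is recovered.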

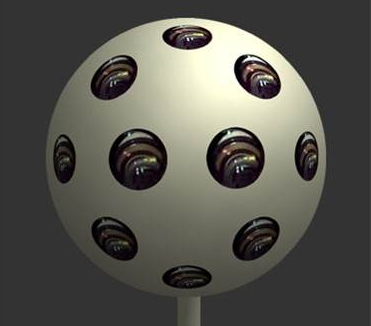

Camera Design principles

Analysis of how the design of cameras influences the estimation of motion and structure from video led to a few theoretical insights. First, I showed that the precision with which one can estimate the 3D motion depends on the camera’s field of view. While for a full field of view camera the optimization involved in solving the estimation is well-behaved, for a classical planar camera there is a consistent ambiguity (C1, C2). Subsequently, we developed camera prototypes approximating spherical eyes and software solutions to better solve for motion (C3). Second, we showed that the ideal moving sensor for structure estimation would sample the light coming from the scene at multiple imaging surfaces, and that a camera implementing this principle leads to fast and robust algorithms for 3D photography (C4, C5).
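One familiar way to see why field of view matters is to compare the image motion caused by a sideways translation with that caused by a rotation about the vertical axis. The sketch below does this for a planar image, using the standard instantaneous motion-field equations with focal length 1 and a fronto-parallel scene at constant depth. These modelling choices, the sign convention, and all function names are assumptions made for illustration; the analysis in the cited papers is more general and is not reproduced here.

```python
import numpy as np

def flow_field(t, w, half_width, depth=1.0, n=41):
    """Motion field on a planar image (focal length 1, constant scene depth).

    t = (tx, ty, tz) is the translation, w = (wx, wy, wz) the rotation.
    half_width is the image half-extent, i.e. tan(half field of view).
    """
    coords = np.linspace(-half_width, half_width, n)
    x, y = np.meshgrid(coords, coords)
    tx, ty, tz = t
    wx, wy, wz = w
    u = (-tx + x * tz) / depth + wx * x * y - wy * (1 + x**2) + wz * y
    v = (-ty + y * tz) / depth + wx * (1 + y**2) - wy * x * y - wz * x
    return np.stack([u, v])

def similarity(a, b):
    """Cosine similarity between two flow fields (1.0 means indistinguishable up to scale)."""
    return float(np.sum(a * b) / (np.linalg.norm(a) * np.linalg.norm(b)))

def translation_rotation_similarity(half_width):
    trans = flow_field((1, 0, 0), (0, 0, 0), half_width)  # sideways translation
    rot = flow_field((0, 0, 0), (0, 1, 0), half_width)    # rotation about the vertical axis
    return similarity(trans, rot)

narrow = translation_rotation_similarity(0.1)  # roughly 11 degrees field of view
wide = translation_rotation_similarity(1.0)    # 90 degrees field of view
```

In this toy setting the two fields are almost identical for the narrow field of view and become easier to tell apart as the field of view widens, which is in the spirit of the ambiguity described above. It does not model a full spherical field of view.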

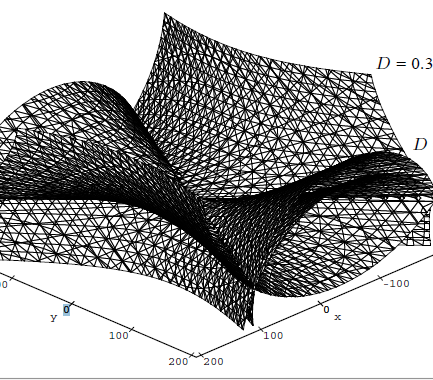

Visual distortion of space

We asked how the estimation of motion and the estimation of structure are related. Since in general it is not possible to estimate 3D motion accurately, what are the effects of errors in the estimated viewing geometry (motion or stereo) on the estimated depth? Our framework in (D1, D2) describes the transformation from actual depth to estimated, distorted depth. This framework not only allowed us to account for many existing psychophysical findings, but also gave rise to new 3D motion estimation and calibration algorithms (D3, D4).

Linking of motion fields

As a robot moves through the scene, a video is recorded on its camera. From consecutive image frames in the video we compute the image motion field, which serves as input to any further processing. Most image-motion based algorithms use only single flow fields, but two or more consecutive flow fields carry additional information about the scene structure. However, this information is difficult to use. Previous constraints enforced the structure of the scene to be the same in two consecutive flow fields, leading to equations in which structure and motion parameters are intertwined and thus to ill-posed estimation problems. In (L1) we introduced a constraint for combining the information in multiple consecutive image motion fields that enforces the shape of the scene, as opposed to its depth, to be the same in consecutive frames. This constraint allows the parameters due to rotation and translation to be separated and was implemented in a stable algorithm for motion and structure estimation.

Limitations to Image Motion

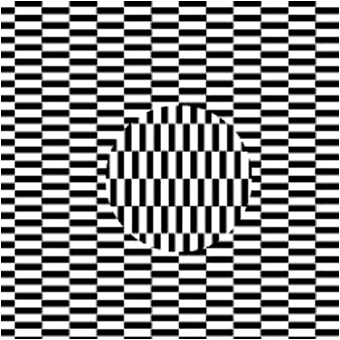

The early representation for visual motion is the image motion field. Its computation can be modelled as a form of estimation. However, because of physical constraints, image motion cannot always be estimated accurately. Two of these limitations are:

(1) Noise in images causes statistical bias, and as a result mis-estimation of image features such as lines, intersections of lines and local image movement (I1, I2, I3). This can explain many geometric optical illusions, illusory patterns due to motion signals, and the perceptual effect of underestimation of 3D shape observed in psychophysical experiments. We also showed how to best deal with the bias in Computer Vision problems (I4, I5).

(2) The model for motion processing in humans is based on energy filters. Because biological vision has to be real-time, the spatial components of the filter are symmetric, but the temporal components are asymmetric (causal). In other words, only the past but not the future is used to estimate the temporal energy. We showed that such filters mis-estimate image motion at certain resolutions and this can account for illusory motion perception in a new class of static patterns (a prominent pattern is called “Rotating Snakes”) and explain the dependency of the perception on the resolution of the patterns (I6).
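The statistical bias in point (1) can be illustrated with a one-dimensional toy problem. The sketch below assumes a least-squares velocity estimator based on the brightness-constancy relation `it = -ix * u`, with independent Gaussian noise added to both the spatial and temporal derivatives. The estimator, the noise model, and all names are assumptions chosen for illustration and are not taken from the cited papers.

```python
import numpy as np

def ls_velocity(ix, it):
    """Least-squares velocity from the 1D brightness-constancy relation it = -ix * u."""
    return float(-np.sum(ix * it) / np.sum(ix * ix))

rng = np.random.default_rng(0)
n_samples = 100_000
true_u = 1.0
signal_sigma = 1.0   # spread of the true spatial derivatives (assumed)
noise_sigma = 0.5    # spread of the measurement noise (assumed)

# Noise-free derivatives that satisfy the constraint exactly.
ix_clean = rng.normal(0.0, signal_sigma, n_samples)
it_clean = -ix_clean * true_u

# Noisy measurements of both derivatives.
ix_noisy = ix_clean + rng.normal(0.0, noise_sigma, n_samples)
it_noisy = it_clean + rng.normal(0.0, noise_sigma, n_samples)

estimate_clean = ls_velocity(ix_clean, it_clean)
estimate_noisy = ls_velocity(ix_noisy, it_noisy)

# Noise in ix inflates the denominator, so the estimate shrinks by roughly
# signal_sigma^2 / (signal_sigma^2 + noise_sigma^2), however many samples are used.
predicted = true_u * signal_sigma**2 / (signal_sigma**2 + noise_sigma**2)
```

In this setting the noisy estimate falls systematically below the true velocity and stays close to `predicted`. The point is that the error is a bias, not random scatter: collecting more measurements does not remove it.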