Data science is hard. Wouldn’t it be cool if we could automate the most labor-intensive parts? Is it possible to build intelligent systems that can not only learn from examples, but can also help us build other intelligent systems?

In my research group, we’ve been tinkering with machine learning techniques to solve security problems. For example, we trained classifiers to forecast which vulnerabilities will be exploited in the wild, based on information from Twitter, and to detect malware by analyzing the download patterns of executables on end hosts. A machine learning classifier automatically learns models of malicious activity from a set of known-benign and known-malicious observations, without the need for a precise description of the activity prepared in advance. To get these classifiers to work well, we had to scratch our heads to come up with good features that set apart the benign and malicious data points. Should we incorporate domain-specific information about vulnerabilities in addition to Twitter features? (Yes, it turns out.) Should we consider single download events, or should we build more complex models, like graphs? (Graphs work better.) Is the graph structure interesting enough, or should we look into how these graphs evolve? (We should.) This feature engineering process is key to the success of any machine learning project, and it underscores the value that data scientists bring to the table.

![New terms are introduced at a constant rate in Oakland papers.](/~tdumitra/images/wordCount.png)

New terms are introduced at a constant rate in Oakland papers.

But feature engineering has been the most difficult and time-consuming part of our security research. It is common for large projects to invest many person-months in identifying and incorporating informative features, and more information about malware and attacks becomes available each year. For example, about 100,000 research papers have been published about malware and over 600,000 about intrusion detection. On top of that, the security community seems to adopt new technical terms at a constant rate. This is probably a result of the security arms race, where hackers keep coming up with new attacks and behaviors to escape detection. As a result, it gets more and more difficult over time for analysts to assimilate all the relevant technical details, and manual feature engineering likely draws from only a fraction of the knowledge available.

To escape long hours of reading, we built a system, called FeatureSmith, for automatically engineering features to detect malware. In our paper [CCS 2016] we show how to do this by distilling the knowledge from thousands of research papers. We found that it is not enough to extract all the possible malware terms mentioned in text, or to rank them by their frequency. To discover informative features, we had to mirror the human feature engineering process.

We don’t know how analysts come up with feature ideas, but we can look at how they describe this process. Drebin is a state-of-the-art malware detector for Android, which uses a classifier with 545,334 features to distinguish benign and malicious apps. These features include 315 Android API calls considered suspicious. Here is how the Drebin authors justify choosing these calls out of the 20,000+ from the Android API: “API calls for accessing sensitive data, such as getDeviceId() and getSubscriberId()”, “API calls for sending and receiving SMS messages, such as sendTextMessage()”, or “API calls frequently used for obfuscation, such as Cipher.getInstance()”. In other words, researchers first identify some high-level malware behaviors and then they choose concrete features, like API calls, that they can record experimentally and that may reflect those behaviors. The knowledge needed to do this is scattered across many research papers and industry reports.

To engineer features automatically, we must understand the hidden meaning of the words these documents use to describe malware behavior. For example, a human analyst would probably understand that Android malware is interested in sensitive data and that it may want to send text messages, perhaps after obfuscating them. However, this conclusion is based on the analyst’s knowledge of the security field, as the textual description does not provide sufficient linguistic clues that the behavior is malicious. Artificial intelligence researchers call this the commonsense reasoning problem.

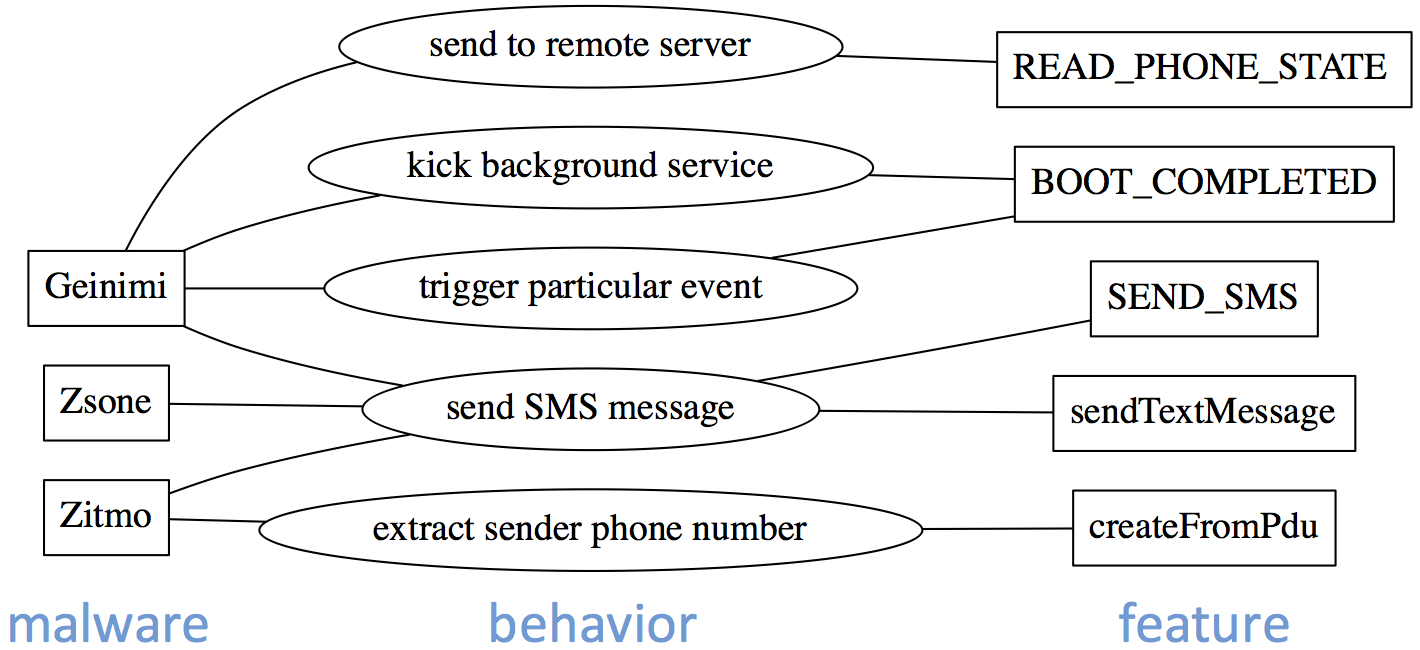

Snippet from FeatureSmith’s semantic network.

FeatureSmith starts by extracting candidate malicious behaviors using heuristics based on the dependency structure of sentences. Our system then builds a semantic network, which links the behavior candidates to known malware families and to concrete malware features that can be extracted directly from apps using static analysis tools. The nodes of our network correspond to concepts (malware families, behaviors and features) discussed in the papers, and the edges connect related concepts. FeatureSmith weights each edge by the semantic similarity of the concepts it connects, estimated from how close together the concepts appear in the text and how often they co-occur. This approach to discovering related concepts in natural language takes advantage of the cognitive process of semantic priming: effective descriptions first mention semantically related terms, and then discuss increasingly less relevant concepts. With this approach we can discover an open-ended collection of malware behaviors. IBM’s Watson question answering system also used a semantic network internally, but our network has a different structure.
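The edge weighting described above can be illustrated with a small sketch. This is not FeatureSmith's implementation: the toy sentences, the inverse-distance weight, the `window` parameter, the two-hop scoring rule, and the function names `build_network` and `rank_features` are all assumptions made for illustration. The idea is only that concepts mentioned close together, and often, end up with heavier edges, and that features can then be ranked by how strongly they connect to a malware family through a behavior.

```python
import re
from collections import defaultdict


def build_network(sentences, concepts, window=5):
    """Weight each concept pair by summing 1/distance over its co-occurrences.

    Closer mentions contribute more, and repeated mentions accumulate.
    (Illustrative weighting only, not the formula from the paper.)
    """
    weights = defaultdict(float)
    for sentence in sentences:
        tokens = re.findall(r"[a-z_]+", sentence.lower())
        hits = [(i, t) for i, t in enumerate(tokens) if t in concepts]
        for a in range(len(hits)):
            for b in range(a + 1, len(hits)):
                (i, u), (j, v) = hits[a], hits[b]
                if u != v and j - i <= window:
                    weights[frozenset((u, v))] += 1.0 / (j - i)
    return weights


def rank_features(weights, family, behaviors, features):
    """Score a feature by summing family->behavior->feature path products."""
    scores = {}
    for feature in features:
        scores[feature] = sum(
            weights.get(frozenset((family, b)), 0.0)
            * weights.get(frozenset((b, feature)), 0.0)
            for b in behaviors
        )
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical toy corpus; real input would be sentences from research papers.
sentences = [
    "The zitmo trojan will intercept sms messages using sendtextmessage",
    "zitmo can intercept incoming sms",
    "Some apps read the device identifier with getdeviceid to steal data",
]
behaviors = ["intercept", "steal"]
features = ["sendtextmessage", "getdeviceid"]
concepts = {"zitmo", *behaviors, *features}

weights = build_network(sentences, concepts)
ranking = rank_features(weights, "zitmo", behaviors, features)
print(ranking)
```

In this toy corpus, `sendtextmessage` ranks above `getdeviceid` for the family `zitmo`, because only the former is linked to the family through a shared behavior term.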

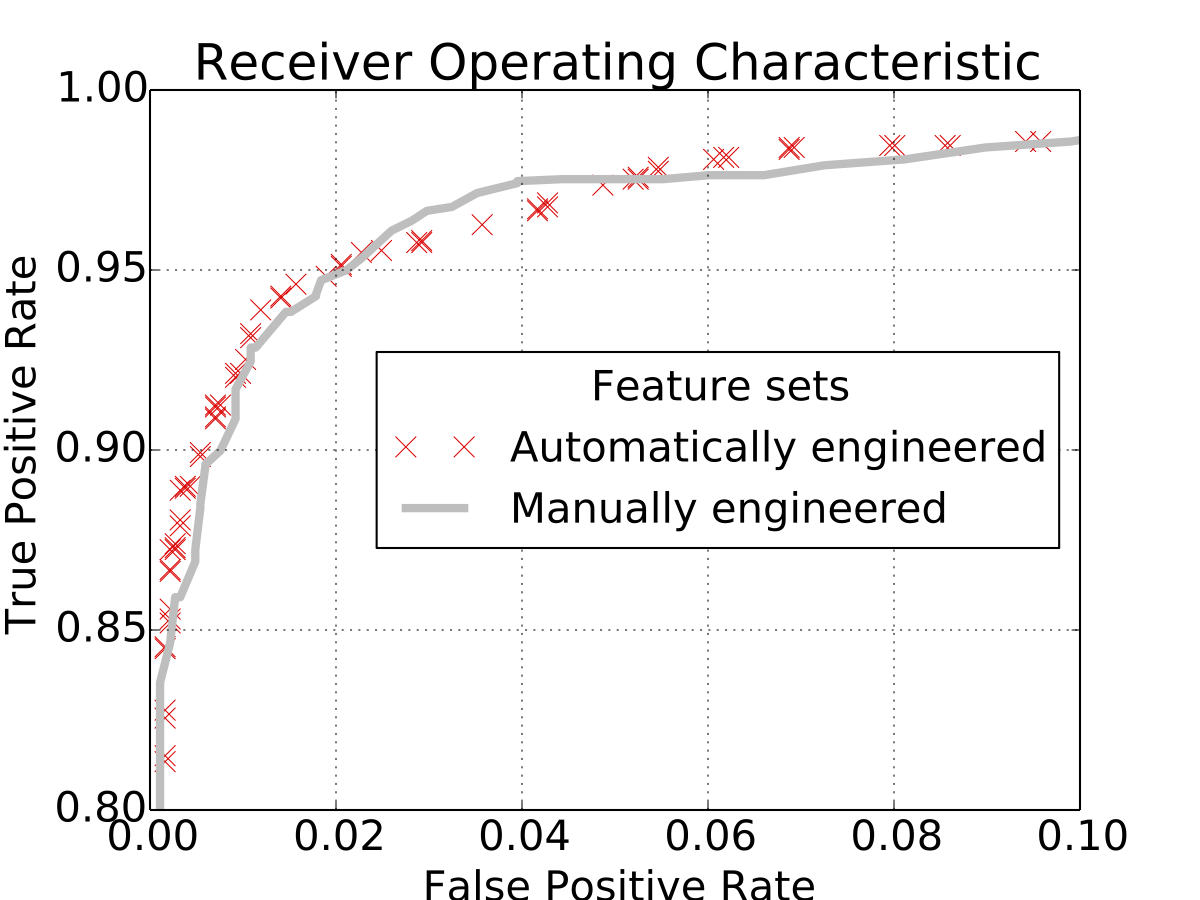

Android malware detection with manually and automatically engineered features.

We used FeatureSmith to generate a feature set for detecting Android malware by mining 1068 papers from security journals and conferences. FeatureSmith discovered 195 features that were close to Android malware on the semantic network. We trained a classifier using these features on a large data set of benign and malicious apps, and we compared it to another classifier that used Drebin’s much larger feature set. The two feature sets had the same detection performance: 92.5% true positives for only 1% false positives. In addition, FeatureSmith engineered some informative features, such as API calls getSimOperatorName() and getNetworkOperatorName(), which are absent from the Drebin feature set but are nevertheless often invoked by malware. The details of our implementation and more experimental results are in the paper [CCS 2016].
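For readers unfamiliar with the "true positives at a fixed false positive rate" metric quoted above, here is a minimal sketch of how such a number can be computed from classifier scores. The function name `tpr_at_fpr`, the toy scores, and the labels are hypothetical and are not taken from our experiments; the sketch simply tries every score as a detection threshold and keeps the best true positive rate whose false positive rate stays within the budget.

```python
def tpr_at_fpr(scores, labels, max_fpr=0.01):
    """Best true positive rate achievable with false positive rate <= max_fpr.

    scores: higher means "more likely malicious"; labels: 1 = malicious, 0 = benign.
    """
    positives = sum(1 for y in labels if y == 1)
    negatives = len(labels) - positives
    if positives == 0 or negatives == 0:
        raise ValueError("need at least one benign and one malicious sample")

    best_tpr = 0.0
    # Try each distinct score as the threshold for flagging an app as malicious.
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
        fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
        if fp / negatives <= max_fpr:
            best_tpr = max(best_tpr, tp / positives)
    return best_tpr


# Hypothetical toy data: 4 malicious and 6 benign apps.
scores = [0.95, 0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1, 0.05]
labels = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]

print(tpr_at_fpr(scores, labels, max_fpr=0.0))
print(tpr_at_fpr(scores, labels, max_fpr=0.2))
```

Reporting detection at a fixed, low false positive rate makes two feature sets directly comparable, since each classifier is judged at the same tolerance for false alarms.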

We did not design FeatureSmith to replace human analysts with automated tools, in case anyone worries about that. Cyber attackers are inventive, and manual feature engineering benefits from human intuition, a mechanism we don’t yet know how to simulate. Instead, automatic feature engineering draws from thousands of documents, can link related concepts across different papers, and allows security analysts to benefit from the entire body of published research. Along with our paper, we are releasing FeatureSmith’s semantic network and the automatically engineered features at www.featuresmith.org; we hope that this will stimulate further research into automatic feature engineering.

Paper: [CCS 2016]

Dataset: www.featuresmith.org

References

[CCS 2016] Z. Zhu and T. Dumitraș, “FeatureSmith: Automatically Engineering Features for Malware Detection by Mining the Security Literature,” in ACM Conference on Computer and Communications Security (CCS), Vienna, AT, 2016.
